Responsible AI Guidelines

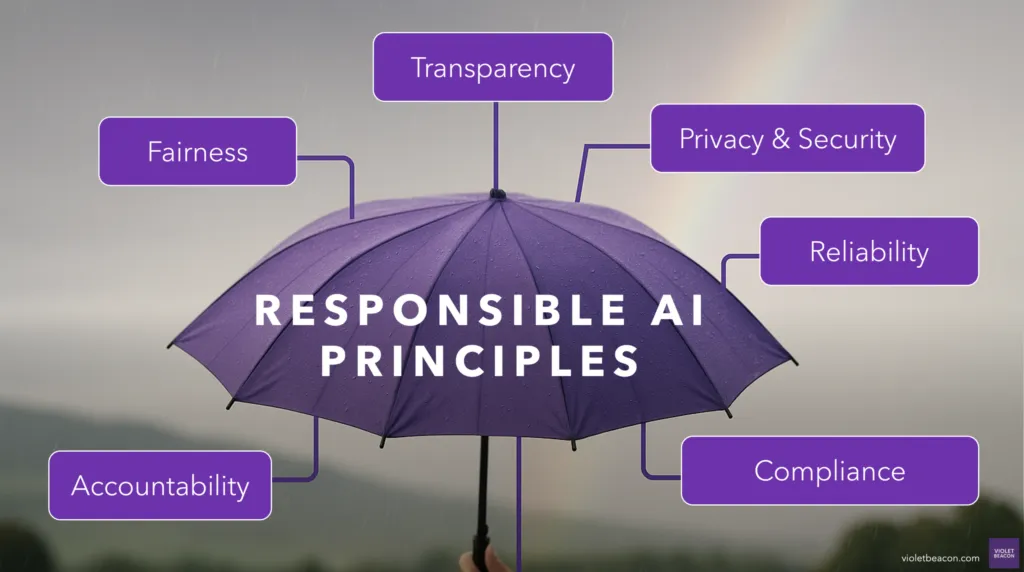

Principles for building AI conscientiously throughout its entire lifecycle. These guidelines reflect our commitment to thoughtful, human-centered AI adoption.

What Is Responsible AI?

Responsible AI is artificial intelligence that is built conscientiously throughout its entire lifecycle, from concept and design through deployment, monitoring, and retirement.

It involves a set of principles and practices that ensure AI systems serve the people who depend on them. It means asking the right questions before, during, and after implementation, and being willing to slow down when the stakes are high.

Official Definitions of Artificial Intelligence

An AI system is a machine-based system that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments. Different AI systems vary in their levels of autonomy and adaptiveness after deployment.

oecd.ai/en/wonk/definition

A machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing real or virtual environments.

csrc.nist.gov/glossary/term/artificial_intelligence

Responsible AI is about making deliberate, informed choices at every stage, so that AI works for the people it's meant to serve.

Benefits of Responsible AI

Adopting responsible AI practices creates measurable advantages across your organization.

What Is an AI System?

Understanding the three core components helps you make better decisions about governance, risk, and implementation.

Hardware: Compute & Infrastructure

Software: Models & Algorithms

Data: Inputs & Context

Key Practice Areas

These are the areas where responsible AI principles become daily practice.

Transparency and Explainability

Transparency means being open about when and how AI is used in your organization. Explainability means ensuring that AI decisions can be understood by the people they affect.

This includes documenting which tools are in use, what data they access, how outputs are generated, and how decisions are reviewed. When AI is part of a customer-facing process, people have the right to know.

Model Choice and Alignment

Not every AI model is appropriate for every task. Model choice involves selecting tools whose capabilities, limitations, and training data align with your specific needs and ethical standards.

Alignment means ensuring the model's behavior matches your organization's values, including how it handles edge cases, sensitive topics, and areas where it should defer to human judgment.

Guardrails and Safety

Guardrails are the constraints you put around AI systems to keep them operating within safe, intended boundaries. This includes input validation, output filtering, usage limits, and human-in-the-loop checkpoints.

Safety planning also means defining what happens when something goes wrong: who gets notified, how the system is paused, and how errors are corrected.
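For teams that build or configure AI tools themselves, the guardrails and safety plan described above can be sketched in a few lines of code. This is a minimal, hypothetical Python sketch, not a prescribed implementation: the `Guardrails` class, its limits, and the email-redaction rule are illustrative assumptions, and `model_fn` stands in for whatever AI tool you actually use.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Illustrative output filter (an assumption): redact email addresses before release.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


@dataclass
class Guardrails:
    """Hypothetical wrapper that applies simple guardrails around a model call."""
    max_input_chars: int = 2000   # input validation: reject empty or oversized prompts
    max_calls: int = 100          # usage limit per session (illustrative value)
    paused: bool = False          # operator-controlled switch to halt the system
    calls_made: int = 0
    review_queue: List[str] = field(default_factory=list)

    def run(self, prompt: str, model_fn: Callable[[str], str],
            customer_facing: bool) -> Optional[str]:
        # Safety plan: refuse all work while the system is paused.
        if self.paused:
            raise RuntimeError("AI system is paused pending review")
        # Input validation.
        if not prompt.strip() or len(prompt) > self.max_input_chars:
            raise ValueError("prompt failed input validation")
        # Usage limit.
        if self.calls_made >= self.max_calls:
            raise RuntimeError("usage limit reached")
        self.calls_made += 1
        # Output filtering.
        output = EMAIL_PATTERN.sub("[REDACTED]", model_fn(prompt))
        # Human-in-the-loop: customer-facing output waits for a person to approve it.
        if customer_facing:
            self.review_queue.append(output)
            return None
        return output


if __name__ == "__main__":
    fake_model = lambda p: "Contact alice@example.com for details."  # stand-in model
    g = Guardrails(max_calls=2)
    print(g.run("Summarize this ticket", fake_model, customer_facing=False))
    print(g.run("Draft a reply", fake_model, customer_facing=True))
    print(g.review_queue)
```

Holding customer-facing outputs in a review queue, rather than returning them directly, is one way to implement a human-in-the-loop checkpoint, and the `paused` flag is one simple answer to "how is the system paused." Real deployments would likely need richer filters and logging.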

Data Privacy

AI systems often require access to sensitive data. Responsible AI means understanding what data your tools collect, where it's stored, who has access, and whether your usage complies with regulations such as GDPR, HIPAA, and emerging AI-specific laws.

Privacy isn't just a legal requirement. It's a trust requirement. People need to know their data is handled with care.

Thoughtful Deployment

Deployment isn't the finish line. It's where responsible AI practices become most critical. Thoughtful deployment means piloting before scaling, monitoring outputs continuously, and having clear processes for feedback and course correction.

It also means being honest about what AI can and cannot do, setting realistic expectations with your team and clients, and being willing to pull back when something isn't working.

Oversight and Governance

Responsible AI requires ongoing human oversight. Whether through a formal committee or a designated role, these are the key responsibilities.

Identifying Opportunities

Evaluate where AI can add genuine value without introducing unnecessary risk or complexity.

Developing Governance Protocols

Create policies, usage guidelines, and decision-making frameworks that apply across the organization.

Monitoring Implementation

Track how AI systems are performing, whether they're being used as intended, and where adjustments are needed.

Evaluating Impact

Assess the real-world effects of AI on people, processes, and outcomes, and adjust course when needed.

Fostering Education and Buy-In

Build AI literacy across your organization so that responsible practices are understood, supported, and sustained.

What to Look For: A Practical Checklist

Before deploying any AI tool, ask yourself these questions.

Do I know what data this tool uses, and is it handled securely?

Can I explain to a client or employee what the AI is doing?

Is a human reviewing AI outputs before they reach customers?

Does this tool make my team more capable, or does it replace judgment I want people to keep exercising?

If something goes wrong, do I know how to turn it off or correct it?

Risks to Monitor

Even well-intentioned AI adoption carries risks. Awareness is the first step toward prevention.

Ready to Put These Principles into Practice?

Whether you're just starting with AI or looking to strengthen your existing approach, we can help you build a responsible foundation.