What is Responsible AI?

A plain-language guide for organizations that want to use AI well. Understand the principles, avoid the risks, and build AI systems your team actually trusts.

What Does "Responsible AI" Actually Mean?

Responsible AI refers to the practice of developing and using artificial intelligence in ways that are ethical, transparent, accountable, and aligned with human values.

The practical version: responsible AI means you know what your AI is doing and why, you can explain it to your team and clients, you've thought about what could go wrong, and you've made deliberate choices about when AI should and shouldn't be making decisions.

It's the difference between deploying AI because everyone else is and deploying AI because you've thought it through.

- ✓ You know what your AI is doing and why
- ✓ You can explain it to your team and clients
- ✓ You've thought about what could go wrong
- ✓ Humans stay in the loop for decisions that matter
- ✓ You've made deliberate choices about when AI should and shouldn't decide

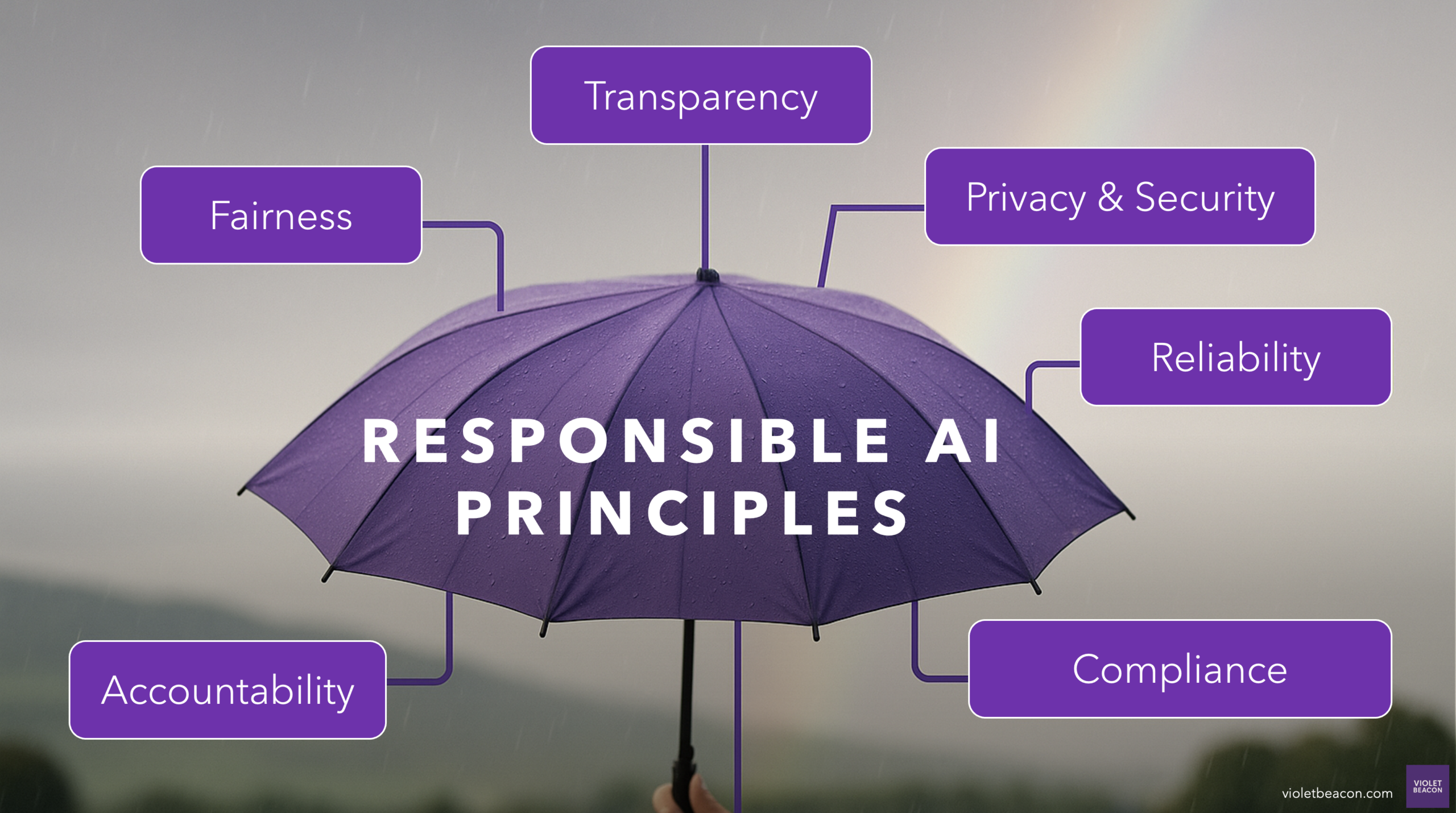

The 6 Principles of Responsible AI

Responsible AI is an umbrella term for implementing AI conscientiously at every stage. It rests on a set of principles that keep AI working for people, through deliberate choices about which problems it solves, how it makes decisions, and how its impacts are measured and managed.

Transparency

Explain how your AI tools work and what data they use. Be honest about when AI is involved.

Fairness

Actively check whether your tools produce fair outcomes. Correct course when they don't.

Privacy & Security

Know what data your tools collect, where it goes, and whether your use of it complies with regulations such as GDPR and HIPAA.

Accountability

Every AI decision has a clear owner. Humans stay in the loop for the decisions that matter.

Reliability

Test outputs against known-good results, monitor for drift over time, and have clear processes for when things go wrong.

Compliance

Build ISO/IEC 42001, GDPR, and internal policy compliance into your AI lifecycle from the start.

Download Our Responsible AI Guidelines

A practical reference for teams building AI policies. Covers transparency, fairness, privacy, accountability, reliability, and compliance.

Responsible AI Isn't Just an Ethics Issue. It's a Business Issue

Irresponsible AI adoption creates real business risk. A tool that produces inaccurate outputs that nobody catches damages your reputation. An AI system that handles client data without proper security creates legal liability. A team that doesn't understand or trust its AI tools will quietly work around them.

Businesses that adopt AI thoughtfully tend to see better adoption rates, fewer costly mistakes, and AI systems that actually keep working six months after launch.

Put responsible AI into practice

We help organizations turn these principles into governance frameworks, team training, and compliant AI systems.

Want Help Getting AI Right the First Time?

Start with a conversation. We'll tell you what responsible AI adoption looks like for your specific situation, and whether we're the right fit to help.