If AI Can Teach Us Anything, Why Do We Still Follow People?


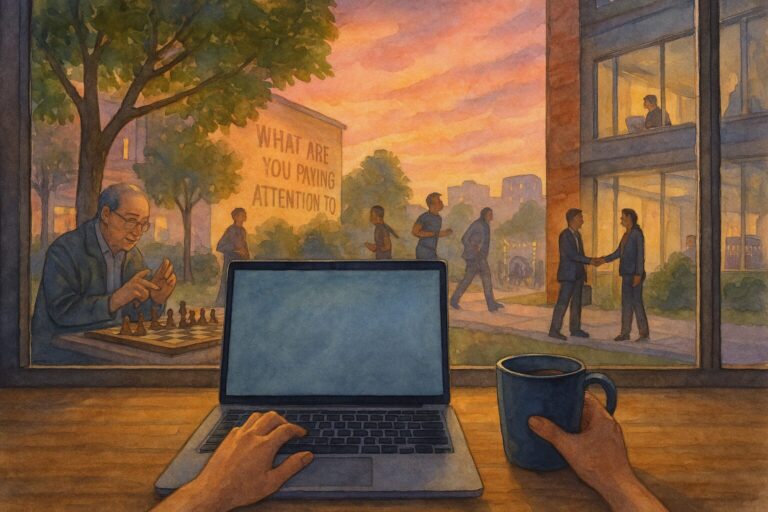

I’ve been thinking about this question a lot lately. We have AI tools that can explain quantum physics, write poetry, debug code, and generate business plans in seconds. So why do we still show up to conferences? Why do we still seek out mentors? Why does a great teacher still change lives in ways that no chatbot can?

The answer, I think, tells us something essential about what AI can’t replace — and why that matters for how we build and deploy these tools.

The Paradox of Infinite Knowledge

We live in a moment where information is essentially free and unlimited. AI has made that even more true. You can ask Claude or ChatGPT virtually anything and get a coherent, detailed response in seconds.

And yet, people are attending more conferences than ever. Podcast listenership keeps growing. Mentorship programs have waiting lists. Book clubs are thriving.

If information were all we needed, this wouldn’t make sense. But information was never the whole picture.

What We Actually Get from People

Presence

There’s something about being in the same room with someone who has done the thing you’re trying to do. Their presence communicates something that text on a screen cannot — that this is real, that it’s possible, that the struggle is normal.

AI can simulate empathy. It can generate encouraging responses. But it can’t sit across from you and let a long pause hold space for the thing you haven’t figured out how to say yet.

Story

Humans are wired for narrative. We don’t just want information — we want the story of how someone got from confusion to clarity, from failure to insight, from “I have no idea what I’m doing” to “here’s what I learned.”

AI can summarize. It can organize. But it doesn’t have its own story. Every response is generated, not lived. And we can feel the difference.

Accountability

When you tell another person your plan, something shifts. There’s a social contract — subtle, unspoken, but real. You’re more likely to follow through. You’re more likely to push past the resistance.

AI doesn’t hold you accountable. You can close the tab. You can ignore the output. There’s no relationship at stake.

Taste and Judgment

A great teacher doesn’t just give you information. They tell you what to ignore. They’ve been through enough to know which details matter and which are noise. They’ve developed taste — a sense for what’s important that comes from experience, not data.

AI can synthesize vast amounts of information, but it can’t tell you what matters to you, in your context, at this moment in your career. That requires the kind of judgment that only comes from lived experience.

What This Means for AI Adoption

I’m not writing this to argue against AI. I use AI tools every day. They make me faster, more thorough, and more creative.

But I think this question — why we still follow people — has real implications for how organizations adopt AI:

1. Don’t automate mentorship. AI can help with onboarding documentation, FAQ bots, and training materials. But the mentor relationship — the one that shapes careers and builds loyalty — that’s human. Protect it.

2. Don’t confuse information delivery with education. Giving someone access to an AI tutor is not the same as investing in their development. Learning happens in relationship, in struggle, in the back-and-forth of trying, failing, and being met with patience.

3. Don’t replace judgment with output. AI can generate ten versions of a marketing strategy. But knowing which one to choose — that’s judgment. And judgment comes from experience, values, and context that no model has.

4. Keep AI in its lane. AI is extraordinary at processing, generating, and organizing information. It’s not a leader. It’s not a mentor. It’s not a colleague. When we ask it to be those things, we diminish both the tool and the relationships.

The Human Element

At Violet Beacon, we talk a lot about keeping the human element at the center of AI adoption. This is part of what we mean.

The most responsible thing you can do with AI is be honest about what it’s for — and what it’s not for. It’s a powerful tool. It’s not a person. And the gap between those two things is where the most important parts of work and life happen.

People don’t follow AI. They follow people. The best AI implementations make that clearer, not muddier.

How AI Was Used in This Post

AI assisted with drafting and editing this article. The ideas, perspective, and personal reflections are entirely human. All AI-assisted contributions were reviewed to ensure a human-centered tone.

Frequently Asked Questions

Why do we still need human mentors when AI can answer almost anything?

Because mentorship provides four things AI cannot: presence (being in the room with someone who has done it), story (lived experience rather than generated text), accountability (a real relationship with stakes), and taste (the judgment to know what matters in your specific context). Information alone was never the whole picture.

Should organizations use AI to replace mentorship?

No. AI can help with onboarding documentation, FAQ bots, and training materials. But the mentor relationship, the one that shapes careers and builds loyalty, is fundamentally human. Organizations should protect mentorship while using AI to handle information delivery.

What does responsible AI adoption mean?

It means being honest about what AI is for and what it is not. AI excels at processing, generating, and organizing information. It is not a leader, mentor, or colleague. The most responsible approach is to keep AI in its lane and protect the human elements that matter most: judgment, connection, and accountability.

Thinking about AI adoption for your team?

Our AI Readiness Assessment helps you understand where to start — with a focus on the human elements, not just the technology.
